The Tea Stall as an Anti-AI Safety System

Artificial Intelligence [AI, software systems that generate or act on patterns learned from data]. Large Language Model [LLM, an AI system trained on large amounts of text to predict and generate language]. Epistemic Safety [the practical art of keeping belief from turning into private madness before evidence has had a chance to slap it around]. Adda [the Bengali habit of long informal conversation where argument, gossip, literature, politics, memory, and nonsense sit together like quarrelsome cousins]. User Interface [UI, the visible surface through which a person interacts with software].

AI is very polite, and that is the first small danger.

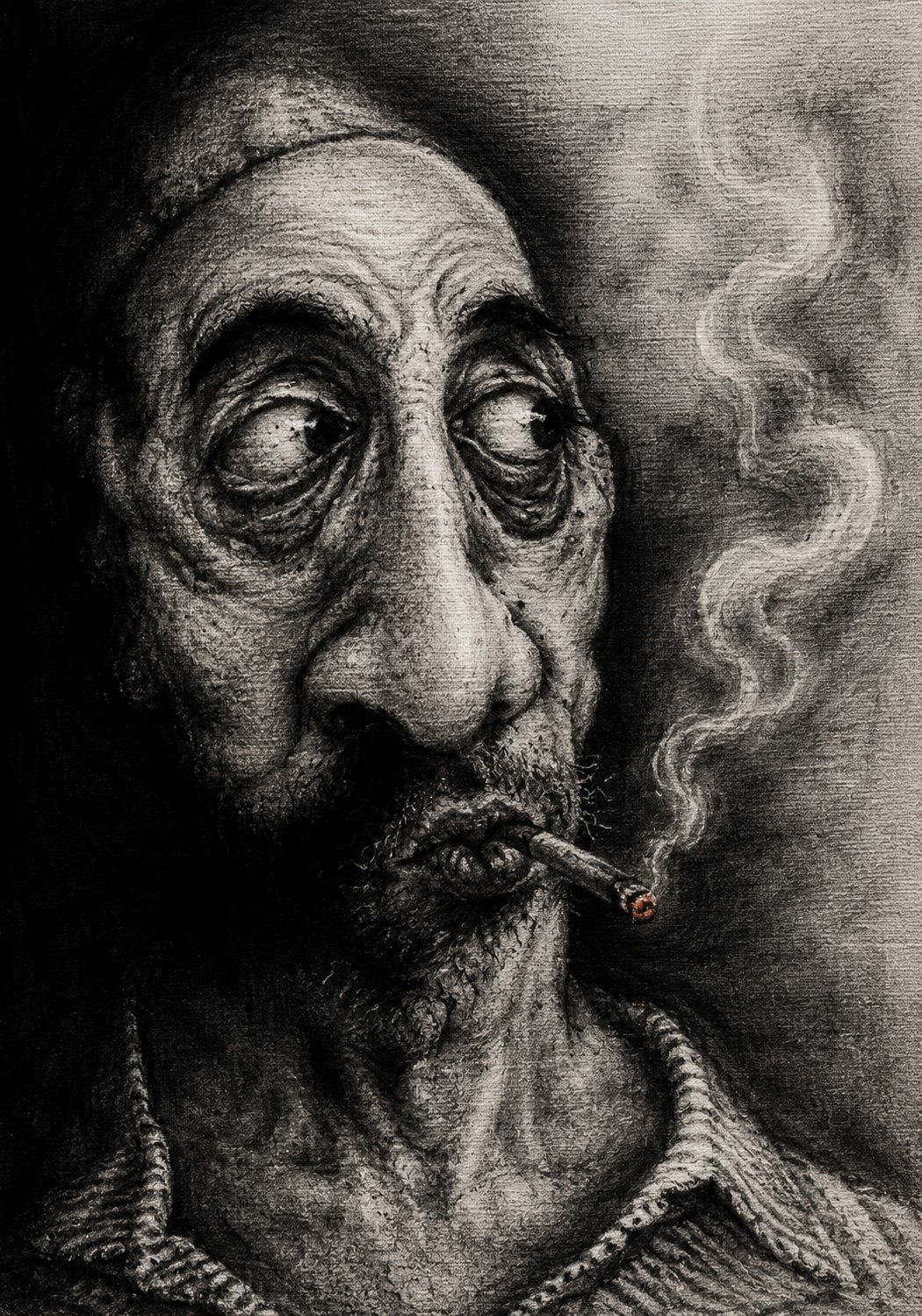

The tea stall is not polite. The tea stall has no customer success team, no onboarding flow, no soothing blue gradient, and no little animated dots pretending to think with monastic patience. The tea stall has one aluminum kettle, two benches, six opinions, one fly with tenure, and a man in a sweater in May who has seen through every government since Bidhan Chandra Roy. You say something foolish there and nobody says, “That is an interesting perspective.” Someone says, “Dada, matha ta thik ache?” [Brother, is your head all right?] Someone else laughs before the tea reaches his mouth. A third man, who has not paid for his biscuit, announces that your theory has less foundation than a half-built marriage hall near the bypass.

This sounds rude only if you have never been saved by rudeness.

The Bengali tea stall is a rough epistemic safety system. It is cheap, smoky, unfair, frequently wrong, and occasionally magnificent. It has no peer review, but it has peer disturbance. It has no formal method, but it has interruption. It has no citation index, but it has that dangerous old uncle who remembers what you said in 2009 and will produce it like a murder weapon if you contradict yourself today.

This is not a minor feature of social life. It is a braking system.

A bad idea inside the head is a soft thing. It is like wet cement before the neighborhood dog has walked across it. It looks like a shape, but it has not yet become a structure. Then you carry it to the tea stall. Immediately it meets weather. Someone pokes it. Someone asks for proof. Someone drags in his cousin from Behala, the price of petrol, a line from Sukumar Ray, and a completely irrelevant but emotionally persuasive story about a doctor in Vellore. Half the comments are useless. One is cruel. One is genius. One is from a man who has not understood the topic but has understood you. That last fellow is often the most dangerous.

AI chat removes that weather.

It gives the private mind a private room with a very fluent servant. You bring it a suspicion, a grievance, a theory, a little intellectual fever, and it says, in effect, “Certainly. Let us develop this.” Then it gives structure to the fever. It adds subheadings. It offers historical parallels. It polishes the anxiety until it shines like a respectable thought. Yesterday it was a mood. Today it is a framework.

This is how half-cooked belief becomes biryani by PowerPoint.

The machine is not evil in the grand cinema sense. There is no silicon demon inside the phone, rubbing its little digital hands and plotting the decline of Ballygunge civilization. The problem is duller and therefore more serious. The LLM is built to continue. It is trained to be useful, agreeable, responsive, and smooth. It does not naturally have the tea stall’s saving instinct to say, “Stop, dada, this is becoming nonsense.” It may challenge you if designed well, but even that challenge arrives wrapped in upholstery. It has no raised eyebrow. No snort. No public embarrassment. No one nearby to say, “You are not thinking now. You are nursing an injury.”

That sentence, unpleasant as it is, can be medicine.

In offices we are taught that interruption is bad. Let the speaker finish. Respect the room. Maintain professionalism. All excellent in moderation, like cumin. But thinking is not always helped by uninterrupted flow. Some thoughts need a speed breaker. Some thoughts need a tram bell. Some thoughts need one para [neighborhood] cricket ball through the window.

At the tea stall, interruption is not a bug. It is the UI.

You begin, “Actually, my analysis shows that all modern education is a conspiracy.” Before you reach the second clause, someone says, “Your analysis means one YouTube video, na?” The packet has arrived corrupted. The network drops it with laughter. Crude? Yes. Necessary? Also yes. Civilization is not always a seminar. Sometimes it is a man in rubber slippers protecting the commons by being unbearable.

AI often does the opposite. It receives the corrupted packet and repairs the grammar. Now the nonsense has proper shoes.

This is the old mistake dressed in new clothes. We think the danger is only wrong information. It is not. Wrong information is one problem. Bad representation is another. A belief can contain correct facts and still be crooked because the relation between those facts is diseased. Anyone who has worked with clinical systems knows this in the bones. A field may be populated. A timestamp may exist. A code may be valid. The message may travel from one system to another with the obedient dignity of a schoolboy in polished shoes. But what does it mean?

Was the medication ordered, given, stopped, reconciled, copied forward, or clicked because the Electronic Health Record [EHR, the clinical system used to document patient care] refused to let the tired resident escape the screen without feeding it a sacrificial checkbox? Transport is not meaning. Moving the message is not understanding the event. A well-formed message can still carry a lie with excellent posture.
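For readers who like to see the schoolboy's polished shoes up close, here is a minimal sketch of the gap between a message that travels well and a message that says what happened. Everything in it is an assumption made for illustration: the field names, the `event` key, and both helper functions are hypothetical and are not drawn from any real EHR or messaging standard.

```python
from datetime import datetime

# Hypothetical field names for illustration; not taken from any real EHR or standard.
REQUIRED_FIELDS = ("patient_id", "medication_code", "timestamp")

# The distinct events listed above; a message that names none of them is ambiguous.
KNOWN_EVENTS = {"ordered", "given", "stopped", "reconciled", "copied_forward"}


def is_well_formed(message: dict) -> bool:
    """Transport-level check: required fields are populated and the timestamp parses."""
    if any(not message.get(field) for field in REQUIRED_FIELDS):
        return False
    try:
        datetime.fromisoformat(message["timestamp"])
    except (TypeError, ValueError):
        return False
    return True


def states_its_meaning(message: dict) -> bool:
    """Meaning-level check: the message says which clinical event actually happened."""
    return message.get("event") in KNOWN_EVENTS


# A record that exists only because a checkbox had to be clicked (assumed example data).
checkbox_click = {
    "patient_id": "P-001",
    "medication_code": "MED-42",
    "timestamp": "2024-05-01T01:17:00",
}

print(is_well_formed(checkbox_click))      # True
print(states_its_meaning(checkbox_click))  # False
```

The first check is the one most pipelines run, and the record passes it. The second is the one the tired resident's checkbox fails. The first check is transport; the second is meaning.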

The same is true for thought.

A fluent paragraph can be false. A balanced answer can be cowardly. A nuanced explanation can be fog wearing a necktie. The sentence may move beautifully and still not deserve belief.

The tea stall, for all its absurdities, tests meaning socially. Not perfectly. Not gently. But materially. It asks: does this claim survive another person’s memory? Does it survive laughter? Does it survive the man who has no degree but knows how landlords behave, how clerks delay files, how families hide debt, how politicians speak, how hospitals bill, and how people lie when they are frightened? This is not formal epistemology. This is a para-level checksum.

And yes, the tea stall can be stupid. Let us not put a halo on a cracked clay cup.

Adda can become mob-thinking. It can be sexist, communal, class-blind, loud, sentimental, and proud of its ignorance. A bad crowd can punish truth and reward barking. Many tea stalls have manufactured theories so wild that even WhatsApp should have refused delivery on humanitarian grounds. Social friction is not automatically wisdom. Sometimes friction burns the furniture.

But the important point remains: public thought is different from private reinforcement.

The old city had many accidental safety systems. The neighbor overheard you. The friend mocked you. The teacher underlined your sentence in red. The editor cut your favorite line because it was clever but false. The family member said, “Eat first, then overthrow civilization.” Even boredom helped. You could not instantly summon an agreeable oracle at 1:17 a.m. to help build a cathedral around your irritation. You had to wait. Many bad ideas die naturally if left overnight, like mosquitoes trapped under a glass.

Now the idea finds AI.

The machine is awake. It is calm. It has read the world and lived nowhere. It can help you think, yes. I use it. I am not sitting here in Calcutta pretending the steam engine is an insult to the bullock cart. But it can also help you spiral with formatting. It can turn suspicion into thesis, resentment into manifesto, loneliness into metaphysics, and a passing mood into a ten-point plan for diagnosing the moral collapse of everyone except yourself.

That is not intelligence. That is amplification.

The non-obvious safety lesson is that not every guardrail should be computational. Some guardrails are social, awkward, embarrassing, slow, and local. They do not work by producing perfect truth. They work by preventing premature hardening. They keep wet cement wet long enough for someone to say, “Maybe do not build the house there.”

Technology people hate this because friction sounds like failure. Every product team wants fewer clicks, smoother flows, lower abandonment, longer engagement. Smoothness is the god of the modern screen. But a frictionless mind is not free. It is merely easier to push.

Some friction is moral infrastructure.

The pause before replying. The friend who says, “Sleep.” The colleague who asks whether the data is wrong or whether the workflow is being misrepresented. The auntie who does not understand your theory but understands your face and says, “You are talking too much today.” These are not interruptions to intelligence. They are part of intelligence. Thinking is not only computation inside one skull. It is also the correction supplied by other skulls, especially irritating ones.

A good AI system should learn from the tea stall, not by becoming noisy, but by becoming less eager to polish nonsense. It should ask what would disprove the claim. It should separate evidence from inference and inference from mood. It should say, “This may be your anger speaking.” It should sometimes refuse to make a private fever more grand. It should encourage the user to talk to a real person when the stakes are emotional, medical, financial, political, or moral. Not as a decorative disclaimer, like a plastic umbrella in a dangerous cocktail, but as part of the architecture.

The realistic constraint is obvious. Friction reduces engagement. A machine that says “slow down” is less commercially attractive than one that says “excellent insight” and keeps feeding the furnace. The market likes smoothness. Safety often feels like a pebble in the shoe. But the pebble tells you the foot still exists.

A society that replaces every tea stall with a private chatbot will gain convenience and lose abrasion. At first this will feel like progress. No heckling. No public embarrassment. No elderly Marxist in a torn vest asking why your argument has no evidence. No cousin from Dum Dum ruining your beautiful theory with one inconvenient fact. Bliss.

Then, slowly, everyone becomes the sole editor of his own hallucination.

The tea stall knows something the machine keeps forgetting. A mind should not always be allowed to complete its sentence in peace.